A Revolutionary Breakthrough in Computing Architecture

Researchers from the Polytechnic University of Milan have developed a groundbreaking intelligent chip capable of dramatically reducing energy consumption while accelerating the processing of massive data sets. By moving computation directly inside the memory, this innovation challenges the traditional computer architecture that has dominated for decades.

As artificial intelligence continues to fuel the race for computing power, energy efficiency has become a critical limitation. Today, the constant movement of data between memory and processor is one of the main obstacles to performance and sustainability.

To address this issue, the research team led by Professor Daniele Ielmini introduced a new approach based on analog in-memory computing.

Breaking the Von Neumann Bottleneck

Traditional computers rely on the Von Neumann architecture, where memory and processing units are physically separated. This design forces data to travel back and forth continuously, creating a major bottleneck that slows down operations and consumes enormous amounts of energy.

The new Italian chip takes a radically different path: in-memory computing. Instead of transferring data to a processor, it performs computations directly where the data is stored.

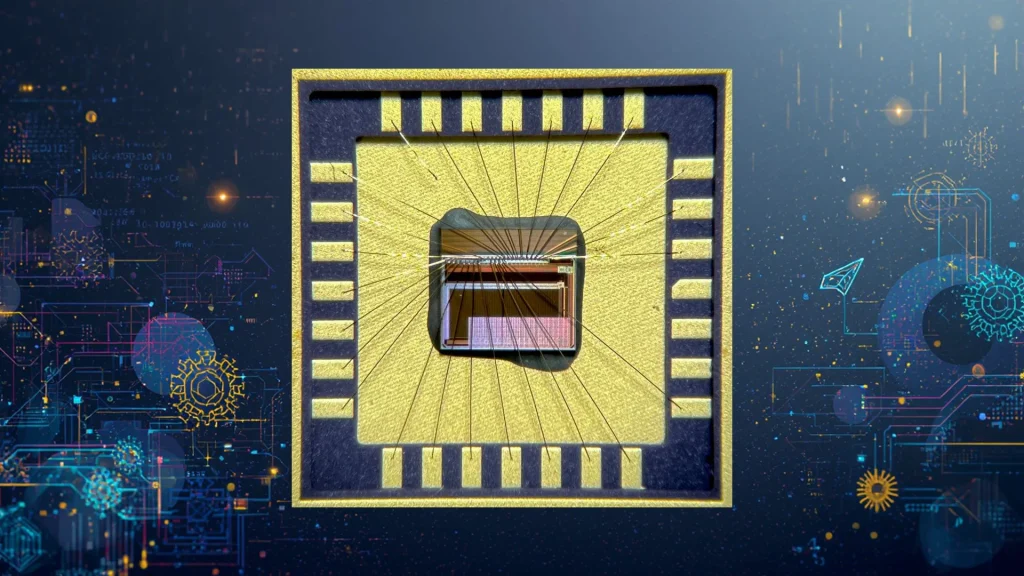

The chip is built around two 64×64 arrays of programmable resistive memory devices, organized in a grid-like structure. This configuration allows complex calculations to be carried out without the data ever leaving the memory.

By eliminating internal data transfers, systems become both faster and significantly more energy-efficient, the researchers explain.
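To make the idea concrete, here is a minimal sketch of how a grid of resistive devices is commonly used to multiply a matrix by a vector in place. The article does not describe the chip's actual circuit, so this is the idealized textbook model only: each device stores a conductance, input voltages are applied to the rows, and the currents collected on each column are the results. The function name `crossbar_mvm` and all device values are hypothetical, chosen for illustration.

```python
import random

ROWS, COLS = 64, 64  # array size quoted in the article


def crossbar_mvm(conductances, voltages):
    """Column currents of an ideal resistive crossbar.

    Each device passes a current I = G * V (Ohm's law). Currents that
    share a column wire add up (Kirchhoff's current law), so every
    column delivers one dot product of the inputs with stored values.
    """
    n_rows = len(conductances)
    n_cols = len(conductances[0])
    return [
        sum(conductances[r][c] * voltages[r] for r in range(n_rows))
        for c in range(n_cols)
    ]


random.seed(0)
# Hypothetical values: conductances in siemens, input voltages in volts.
G = [[random.uniform(1e-6, 1e-4) for _ in range(COLS)] for _ in range(ROWS)]
V = [random.uniform(0.0, 0.2) for _ in range(ROWS)]

currents = crossbar_mvm(G, V)
print(len(currents), "column currents; first one (amperes):", currents[0])
```

In hardware, all of these sums would form at once as currents on the column wires, which is why no stored value has to be shipped to a separate processor. The loop here only simulates that behavior and ignores real-world effects such as wire resistance and device noise.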

Stunning Performance and Energy Gains

Early test results point to gains in both speed and energy use:

- Ultra-fast processing: computations complete in approximately 100 nanoseconds, and that time stays stable regardless of the problem size.

- Massive energy savings: the technology consumes up to 5,000 times less energy than state-of-the-art digital computers while maintaining comparable accuracy.

These gains highlight the enormous potential of analog in-memory computing for next-generation hardware.
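A rough way to see why a fixed computation time matters is to count the work a digital processor would do for the same kind of problem. The sketch below assumes a plain n×n matrix-vector product executed one multiply-accumulate at a time, at a hypothetical 1 ns per operation. That per-operation cost is an illustrative assumption, not a measurement, and real digital hardware runs many operations in parallel. Only the 100 ns figure comes from the article.

```python
ANALOG_LATENCY_NS = 100.0   # figure quoted in the article
ASSUMED_NS_PER_MAC = 1.0    # hypothetical digital cost, for illustration only


def digital_mac_count(n):
    """Multiply-accumulates in a straightforward n x n matrix-vector product."""
    return n * n


def sequential_digital_time_ns(n, ns_per_mac=ASSUMED_NS_PER_MAC):
    """Time if the multiply-accumulates ran strictly one after another."""
    return digital_mac_count(n) * ns_per_mac


for n in (8, 16, 32, 64):
    print(
        f"n={n:2d}  MACs={digital_mac_count(n):4d}  "
        f"sequential digital={sequential_digital_time_ns(n):6.0f} ns  "
        f"analog (article)={ANALOG_LATENCY_NS:.0f} ns"
    )
```

The operation count grows with the square of the problem size, while the reported analog time stays flat. The absolute numbers in this toy model should not be read as a benchmark.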

Toward More Sustainable Artificial Intelligence

The project, named ANIMATE (ANalogue In-Memory computing with Advanced device TEchnology), addresses one of the most pressing challenges of modern AI: energy consumption.

Training large language models and operating AI-driven services require vast amounts of electricity. Today, data centers already account for nearly 1% of global electricity consumption, a figure expected to rise sharply.

By drastically reducing the energy needs of servers, this new chip could help slow the explosive growth of AI-related power demand and contribute to a more sustainable digital future.

Applications in 5G, 6G, Robotics, and Autonomous Systems

Beyond AI and high-performance computing (HPC), the chip is also expected to play a key role in future 5G and 6G networks. Its ultra-low latency (100 ns) makes it ideal for managing massive data flows in real time.

The technology is equally promising for robotics and autonomous vehicles. By enabling complex computations locally, without relying on cloud infrastructure, it provides the fast reaction times required for critical decision-making.

A Credible Alternative for the Future of Computing

This breakthrough from Milan represents a strong and credible alternative to conventional computing architectures. By combining speed, energy efficiency, and scalability, analog in-memory computing could redefine the foundations of AI, high-performance computing, and next-generation networks.

Ultimately, this innovation opens the door to a new era of sustainable and efficient computing, where performance no longer comes at the cost of excessive energy consumption.
